Introduction

The transition from cgroup v1 to cgroup v2 has brought significant improvements to Linux resource management, but it also introduced challenges for Kubernetes workloads. A critical issue emerged in how CPU shares are converted to CPU weight, affecting priority allocation and configuration granularity. Today, we are pleased to announce an improved conversion formula that addresses these longstanding problems, ensuring Kubernetes containers maintain proper CPU priority and finer control over resource distribution on cgroup v2 systems.

Background: From CPU Shares to CPU Weight

Kubernetes was initially designed around cgroup v1, where CPU shares were calculated directly from a container's CPU request using the formula cpu.shares = milliCPU × 1024 / 1000, evaluated with integer division. Here, 1024 is the default cpu.shares value in cgroup v1, not a millicore conversion factor. For example, a container requesting 1 CPU (1000m) received 1024 shares, while a 100m request yielded 102 shares (102.4, truncated).
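The request-to-shares calculation can be sketched in a few lines. This is a minimal illustration rather than the kubelet's actual code: the function name `milli_cpu_to_shares` and the constant names are hypothetical, and clamping the result to the valid cgroup v1 cpu.shares range (2 to 262,144) is an assumption made for the sketch.

```python
SHARES_PER_CPU = 1024      # default cpu.shares value in cgroup v1
MILLI_CPU_PER_CPU = 1000   # 1 CPU = 1000m

# Assumed bounds for this sketch: the valid cpu.shares range in cgroup v1.
MIN_SHARES = 2
MAX_SHARES = 262144


def milli_cpu_to_shares(milli_cpu: int) -> int:
    """Convert a CPU request in millicores to a cgroup v1 cpu.shares value."""
    # Integer division truncates, so 100m becomes 102 rather than 102.4.
    shares = milli_cpu * SHARES_PER_CPU // MILLI_CPU_PER_CPU
    # Keep the result inside the assumed valid range.
    return max(MIN_SHARES, min(MAX_SHARES, shares))


if __name__ == "__main__":
    for request in (100, 250, 1000, 2000):
        print(f"{request}m -> cpu.shares = {milli_cpu_to_shares(request)}")
```

The integer division is what turns the 100m request into 102 shares instead of 102.4.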

With the shift to cgroup v2, the concept of CPU shares (range 2–262,144) was replaced by CPU weight (range 1–10,000). To bridge this gap, KEP-2254 introduced a linear mapping formula: cpu.weight = 1 + ((cpu.shares - 2) × 9999) / 262142. This straightforward translation converted the old share range to the new weight range, but it soon became apparent that the linear approach introduced two significant drawbacks.
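As a rough sketch, the linear mapping quoted above can be written as follows. The function name `shares_to_weight_linear` is hypothetical, the integer (truncating) division is an assumption about how the formula is evaluated, and rejecting out-of-range input is a choice made for this example only.

```python
MIN_SHARES, MAX_SHARES = 2, 262144   # cgroup v1 cpu.shares range
MIN_WEIGHT, MAX_WEIGHT = 1, 10000    # cgroup v2 cpu.weight range


def shares_to_weight_linear(cpu_shares: int) -> int:
    """Map cpu.shares onto cpu.weight with the linear formula from the text."""
    if not MIN_SHARES <= cpu_shares <= MAX_SHARES:
        raise ValueError(f"cpu.shares out of range: {cpu_shares}")
    # 9999 = MAX_WEIGHT - MIN_WEIGHT, 262142 = MAX_SHARES - MIN_SHARES
    return MIN_WEIGHT + ((cpu_shares - MIN_SHARES) * 9999) // 262142


if __name__ == "__main__":
    for shares in (MIN_SHARES, 102, 1024, MAX_SHARES):
        print(f"cpu.shares = {shares} -> cpu.weight = {shares_to_weight_linear(shares)}")
```

The two ends of the ranges line up exactly (2 maps to 1 and 262,144 maps to 10,000), and every value in between is scaled by the same constant factor.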

The Problem with the Old Conversion

1. Reduced Priority Against Non-Kubernetes Workloads

In cgroup v1, the default CPU share is 1024, meaning a container requesting 1 CPU had equal priority to system processes outside Kubernetes. Under cgroup v2, the default CPU weight is 100. However, the old linear formula converts a 1 CPU request to a weight of approximately 39, less than 40% of the default. This effectively demotes Kubernetes workloads below any process running at the default weight, including many system daemons. In environments with numerous background services, containerized applications could be starved of CPU time whenever the node is under contention.

Example: A container requesting 1 CPU (1000m) gets cpu.shares = 1024 in cgroup v1, matching the default. In cgroup v2 with the old formula, it receives cpu.weight ≈ 39, far below the default 100. Kubernetes workloads thus lose their competitive standing against non-Kubernetes processes.
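To put the gap in perspective, the sketch below estimates how CPU time would be split under contention. It rests on simplifying assumptions that are not from the announcement: the cgroups are treated as direct siblings, every one of them is fully CPU-bound, and CPU time is divided in proportion to weight. Real nodes have a deeper cgroup hierarchy, so read the numbers as an illustration only. The function name `cpu_fraction` is hypothetical.

```python
from typing import Sequence


def cpu_fraction(weight: int, competing_weights: Sequence[int]) -> float:
    """Fraction of contended CPU time a cgroup receives among busy siblings.

    Assumes proportional sharing: each sibling gets weight / sum(all weights).
    """
    total = weight + sum(competing_weights)
    return weight / total


if __name__ == "__main__":
    default_weight = 100  # cgroup v2 default cpu.weight
    # A 1 CPU container at the default weight versus one default-weight service.
    print(f"weight 100 vs 100: {cpu_fraction(100, [default_weight]):.1%}")
    # The same container at the weight produced by the old linear formula.
    print(f"weight  39 vs 100: {cpu_fraction(39, [default_weight]):.1%}")
```

Under these assumptions, a container competing with a single default-weight service would receive half of the contended CPU time at weight 100, but only about 28% at weight 39.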

2. Unmanageable Granularity for Sub-Cgroups

The linear mapping produces very low weight values for small CPU requests, making it impractical to create finer sub-cgroups within containers. This limitation hampers administrators who want to distribute CPU resources among multiple processes inside a single container—a feature that will become more accessible with future enhancements (see KEP #5474).

Example: A 100m CPU request translates to cpu.shares = 102 in cgroup v1, which is manageable for sub-cgroup configuration. In cgroup v2 with the old formula, the same request yields cpu.weight ≈ 4—too low to allow meaningful subdivision. This lack of granularity restricts advanced resource management strategies.

A New Conversion Approach That Fixes Both Issues

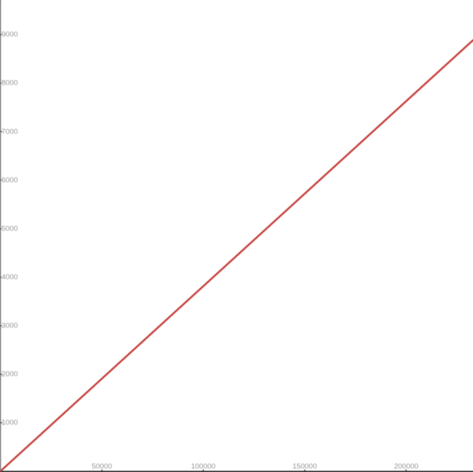

The improved conversion formula directly addresses the two core problems. First, it recalibrates the mapping so that a typical 1 CPU request (1000m) results in a cpu.weight close to the default of 100, restoring equal priority between Kubernetes workloads and non-Kubernetes processes. Second, it provides better granularity for low CPU requests, enabling administrators to define sub-cgroups with meaningful weight values.

While the exact mathematical formulation is beyond the scope of this announcement, the new approach replaces the simple linear scaling with a non-linear curve. The curve maps a default-priority request to the default weight and leaves small requests with enough weight for internal subdivision. The result is a more predictable transition from cgroup v1 to v2 that preserves both priority and configurability.

Conclusion

The move to cgroup v2 is essential for modern Linux systems, but it must not come at the cost of degraded CPU priority for Kubernetes workloads. The new conversion formula corrects the unintended reduction in priority and restores the granularity needed for advanced resource allocation. We encourage all Kubernetes administrators running on cgroup v2 to update their systems to benefit from this enhancement. For further details, refer to the upstream Kubernetes documentation on cgroup v2 and the related tracking issues.